Anchor Text Ratios Are BS

Whoa! Slow down.

Put down the pitchforks and don’t GSA blast me just yet.

Let’s take it slow so nobody gets hurt and go one step at a time.

Yes, the title was a bit click-baity but how else was I going to capture your attention?

Spend 15 minutes trying to come up with an objective and interesting title? Fuck no.

Let me preemptively say that I still run anchor text ratio reports when planning SEO campaigns. I’m not trying to start a fight with anyone, I weigh like 125 pounds (~56kg), I won’t win.

I believe that you need to question everything when it comes to SEO, as much as possible. This allows you to cut out the fat from your campaigns, makes you a better SEO, and it builds better habits to not take things at face value.

So, today, I’m calling into question the effectiveness of anchor text ratios and optimizing your anchor text selection based on those ratios.

If you’re not already familiar with anchor text optimization, you’re probably going to get lost pretty quickly here, so go read up on the basics first. An online backlink checker such as Linkbox can help you analyze your project’s anchor list.

Done? Welcome back!

I’m glad you’re not lying to me and only pretending to have gone back and read it.

Table of Contents

- 1 So, What’s The Problem With Anchor Ratios?

- 1.1 A Normal Report

- 1.2 More Data Sources

- 1.2.1 Ahrefs:

- 1.2.2 Majestic:

- 1.2.3 SEMRush:

- 1.2.4 Moz:

- 1.3 Spam

- 1.4 Other Examples

- 1.5 What Should We Do?

- 1.6 Match Anchors To Link Types

So, What’s The Problem With Anchor Ratios?

My main argument is that the ratios you find can greatly vary, depending on the source(s) of your data and how you classify the data that you get.

Because it can be so varied, you may actually be over or under optimizing your anchor text choices when looking at the “average ratios” from a different perspective.

Let’s look at some of the ways your ratios can be varied depending on how we look at the data.

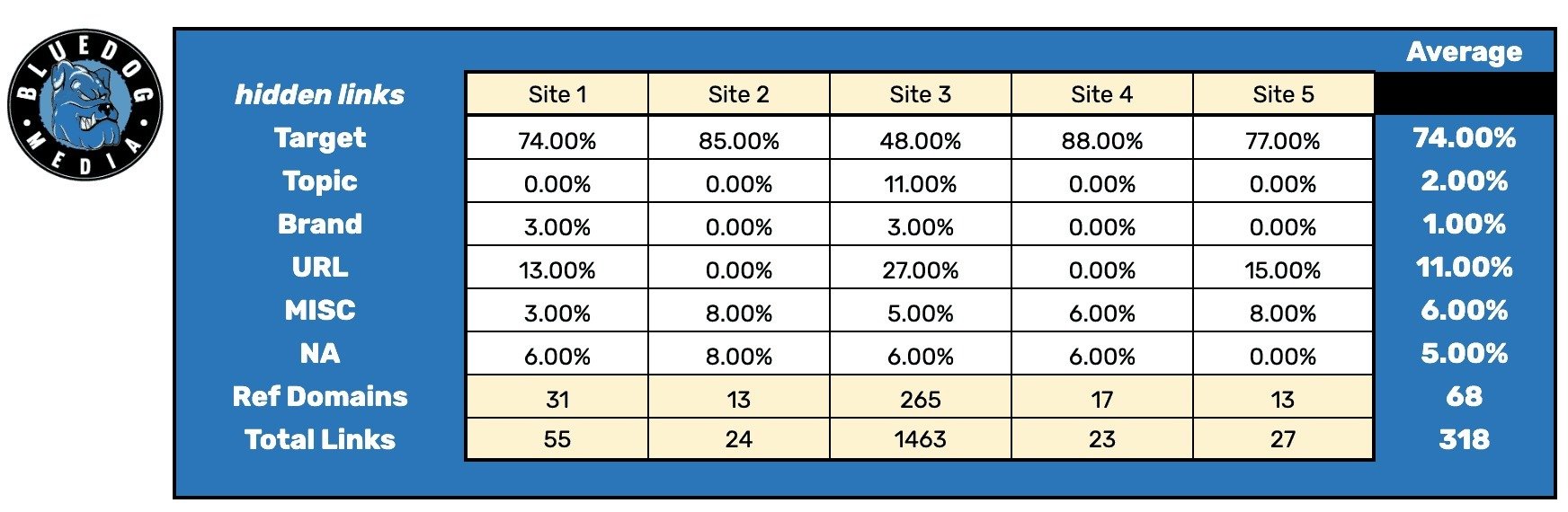

A Normal Report

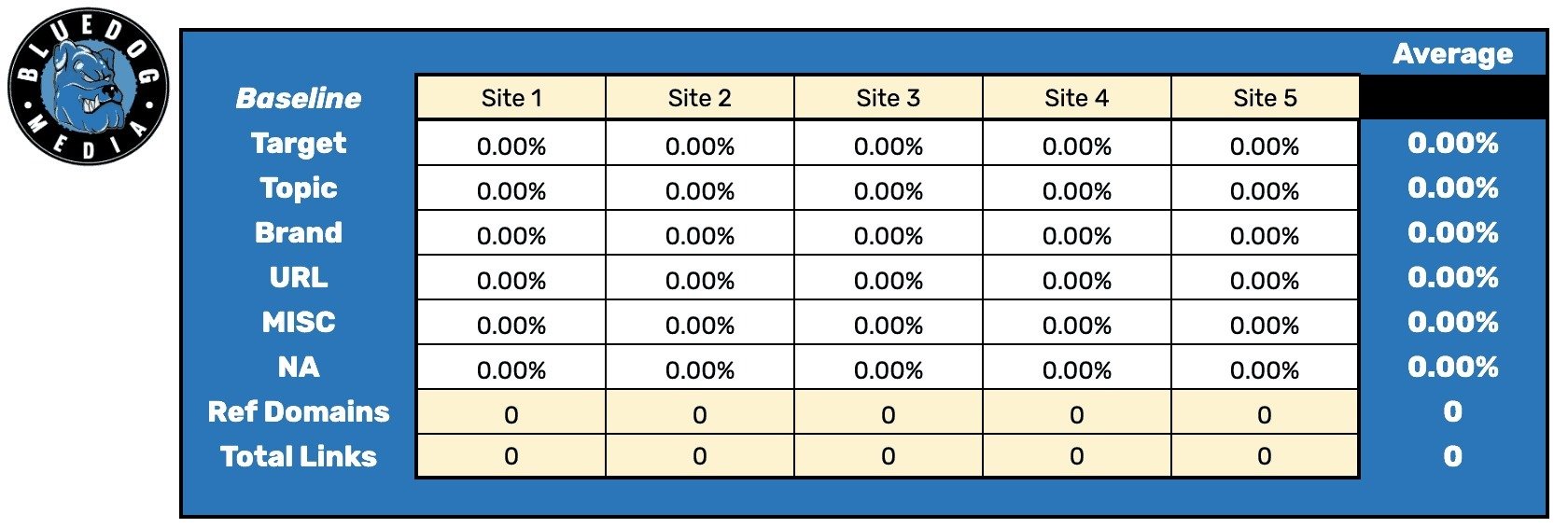

Let’s get some baseline data first.

I’m going to be grabbing the top 5 websites for a buyer-intent keyword for a fairly competitive niche (legal).

I’ll ignore any directories like Yelp, Avvo, FindLaw, etc.

Next, I’ll use Ahrefs to grab all of their anchor text at the page level, using the live index, and then organize that anchor text by type in a spreadsheet.

For the anchor types, we’ll keep it simple and use the following:

- Target – contains a keyword

- Topic – Is about the niche but does not contain a keyword related to the term we pulled from (for example, target = “car accident” topic = “personal injury”)

- Brand – contains the brand name (if brand name isn’t a keyword) or the name of an employee/owner

- URL – URL

- MISC – Anything that doesn’t fit into the above but isn’t blank or spam

- NA – Spam or blank
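If you’d rather script the sorting than do it by hand in a spreadsheet, here’s a rough Python sketch of those buckets. It is not the sheet used for this post. The term lists are made up, the order of the checks (URL, then Target, then Brand, then Topic) is an assumption, and it only sends blank anchors to NA, so spam still needs its own pass.

```python
import re

def classify_anchor(anchor, target_terms, topic_terms, brand_terms):
    """Return one of: Target, Topic, Brand, URL, MISC, NA."""
    text = (anchor or "").strip().lower()
    if not text:
        return "NA"  # blank anchor; spam detection is not handled here
    # Check URLs first so a keyword inside a domain name is not counted as Target.
    looks_like_url = (
        re.match(r"^(https?://|www\.)", text)
        or re.fullmatch(r"[a-z0-9.-]+\.[a-z]{2,}(/\S*)?", text)
    )
    if looks_like_url:
        return "URL"
    if any(term in text for term in target_terms):
        return "Target"
    # Assumption: Brand wins over Topic when an anchor contains both.
    if any(term in text for term in brand_terms):
        return "Brand"
    if any(term in text for term in topic_terms):
        return "Topic"
    return "MISC"

# Hypothetical term lists for a "car accident" keyword.
TARGET = ["car accident"]
TOPIC = ["personal injury"]
BRAND = ["smith law"]

label = classify_anchor("Car Accident Lawyer", TARGET, TOPIC, BRAND)  # "Target"
```

Substring matching is deliberately dumb. It will happily mislabel things, which is kind of the point of this whole article: how you classify changes the ratios you get.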

Then, we’ll organize our data like so:

Each site has its own column and will display the percentage of anchors that fell under each individual anchor type.

The bottom of each column shows how many referring domains and backlinks that page has.

Finally, the “average” column just grabs the average from all of the row values to the left.

The average column is rounded to show whole numbers. In cases where the result is less than 1%, I’ll adjust the equation to allow for floats.
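For anyone who wants the same table outside of a spreadsheet, this is roughly how the per-site percentages and the average column could be computed in Python. The function names and the sample labels are mine, and the "show decimals under 1%" rule is my reading of the rounding described above.

```python
from collections import Counter

ANCHOR_TYPES = ["Target", "Topic", "Brand", "URL", "MISC", "NA"]

def type_percentages(labels):
    """Percentage of anchors per type for one site (labels = list of type names)."""
    counts = Counter(labels)
    total = len(labels)
    return {t: (100 * counts[t] / total if total else 0.0) for t in ANCHOR_TYPES}

def average_row(per_site):
    """Average each type across sites; whole numbers unless the value is below 1%."""
    averages = {}
    for t in ANCHOR_TYPES:
        mean = sum(site[t] for site in per_site) / len(per_site)
        averages[t] = round(mean) if mean >= 1 else round(mean, 2)
    return averages

# Made-up labels for two sites, just to show the shape of the data.
site_a = type_percentages(["Target", "Target", "Target", "Brand"])
site_b = type_percentages(["Target", "Brand", "Brand", "Brand"])
averages = average_row([site_a, site_b])  # Target and Brand both come out at 50
```

Note that this is an average of percentages, so a site with 5 links counts exactly as much as a site with 500.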

I won’t share the exact keyword, but I want to be as transparent as possible about the SERP.

The keyword follows the pattern “[city] car accident attorney”.

The SERP (when I searched it) was:

- Site #1 (inner page)

- Directory

- Directory

- Directory

- Site #2 (inner page)

- Site #3 (homepage)

- Site #4 (inner page)

- Site #5 (inner page)

- Another law firm

- Another law firm

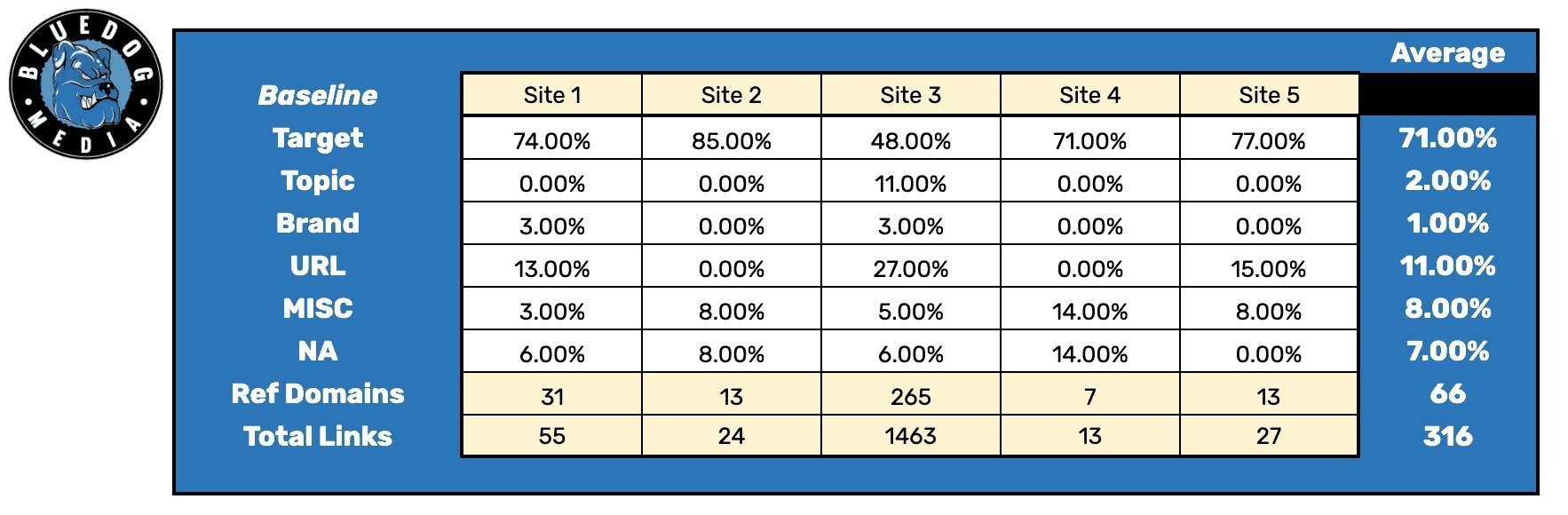

After crunching the numbers, these are our baseline ratios.

More Data Sources

For our baseline, I just pulled the anchor text from Ahrefs.

What if we pull all the data from Ahrefs, Majestic, Moz, and SEMRush?

To do this, I’m going to pull the anchor reports from each tool and then parse out all of the duplicates.

I actually have a sheet that already does this. It’s mainly for disavows, so I’ll just need to make some tweaks to get it to work this way.

Since the anchors report only gives the number of referring domains per anchor text, I’ll have to export the live backlinks report from Ahrefs instead, so that I can actually parse out the duplicates. You can also run the links through Linkbox’s bulk Google index checker to analyze only the backlinks Google knows about.

For transparency: how I got and parsed the data from each source…

Ahrefs:

The backlinks report for the ranking URL using the live index with the grouping set to “all”.

Majestic:

The backlinks report for the ranking URL using the fresh index with “backlinks per domain” set to “all”.

SEMRush:

The backlinks analytics report for the ranking URL with “Links Per Ref. Domain” set to “all” and “all links” selected.

Moz:

I had someone export all the links at the URL level for each ranking URL since I don’t have a Moz account myself.
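The merge-and-dedupe step is the fiddly part, so here is a minimal Python sketch of one way to do it. This is not my disavow sheet. It assumes each export has already been boiled down to (linking page URL, anchor text) rows, and it treats two rows as duplicates when the normalized URL and the lowercased anchor match, which is my assumption about what a “duplicate” is.

```python
from urllib.parse import urlsplit

def normalize_url(url):
    """Lowercase the host and drop the scheme, 'www.', fragment and trailing slash."""
    url = url.strip()
    parts = urlsplit(url if "://" in url else "http://" + url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    path = parts.path.rstrip("/")
    query = "?" + parts.query if parts.query else ""
    return host + path + query

def merge_backlinks(*exports):
    """Merge lists of (source_url, anchor) rows, keeping the first copy of each pair."""
    seen = set()
    merged = []
    for rows in exports:
        for source_url, anchor in rows:
            key = (normalize_url(source_url), anchor.strip().lower())
            if key not in seen:
                seen.add(key)
                merged.append((source_url, anchor))
    return merged

# Hypothetical rows from two tools that both found the same link.
ahrefs_rows = [("https://www.example.com/post/", "Car Accident Lawyer")]
majestic_rows = [
    ("http://example.com/post", "car accident lawyer"),
    ("http://blog.example.org/a", "click here"),
]
combined = merge_backlinks(ahrefs_rows, majestic_rows)  # 2 unique links
```

How aggressively you normalize is yet another knob: dedupe by referring domain instead of by page and the ratios shift again.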

Using these 4 sources instead of just Ahrefs gives us a different-looking result.

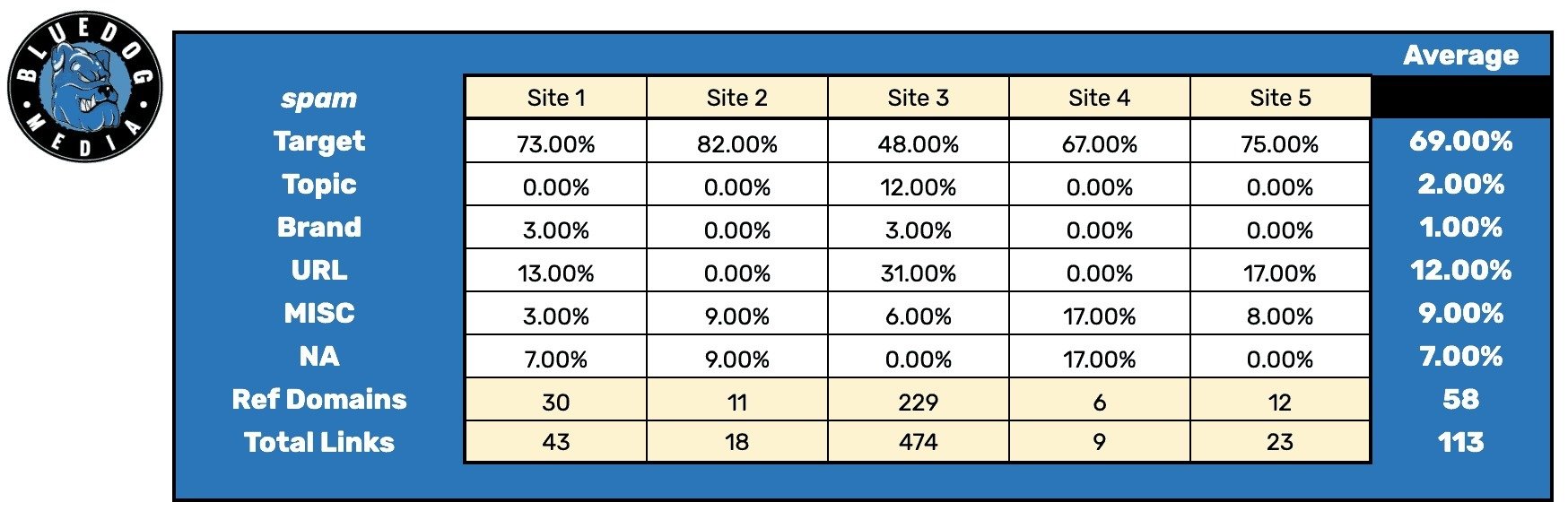

Spam

We know that Google can ignore some amount of spam when it comes to the link profile.

What we don’t know is exactly what Google is or isn’t able to ignore and won’t count towards the link profile.

So, there could be links that Ahrefs reports on, that you use in order to see the anchor profile of a competitor – but a percentage of that is ignored by Google!

What I did here was go back through the baseline data and remove any rows that had spammy anchor text:

- Different languages

- Random strings

- Spam words (porn, gambling, etc.)

Because we don’t know what Google does or does not ignore, I’m just removing the obvious ones without having to look at each individual site.
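I did this pass by eye, but the three checks can be roughed out in Python if you have a lot of rows. Everything here is a guess at a heuristic, not a known Google rule: the word list is illustrative, “different language” is approximated as “mostly non-ASCII letters” (which only makes sense for an English-language SERP), and “random string” means a single long token that mixes letters and digits or has almost no vowels.

```python
import re

# Illustrative word list only; extend it for your own niche.
SPAM_WORDS = {"porn", "casino", "gambling", "viagra"}

def mostly_non_ascii(text):
    """Crude stand-in for 'different language' when the target market is English."""
    letters = [c for c in text if c.isalpha()]
    if not letters:
        return False
    foreign = sum(1 for c in letters if ord(c) > 127)
    return foreign / len(letters) > 0.5

def is_random_string(text):
    """Single long token that mixes letters and digits or has almost no vowels."""
    if len(text) < 8 or any(ch in text for ch in " ./"):
        return False  # skip short anchors, phrases and URL-like anchors
    letters = [c for c in text if c.isalpha()]
    if not letters:
        return False
    if any(c.isdigit() for c in text):
        return True
    vowels = sum(1 for c in letters if c in "aeiou")
    return vowels / len(letters) < 0.2

def looks_spammy(anchor):
    text = anchor.strip().lower()
    if not text:
        return False  # blank anchors are handled elsewhere
    words = set(re.findall(r"[a-z]+", text))
    return bool(words & SPAM_WORDS) or mostly_non_ascii(text) or is_random_string(text)

anchors = ["car accident attorney", "xk7qpz93bd", "best online casino"]
kept = [a for a in anchors if not looks_spammy(a)]  # only the first one survives
```

Expect false positives and false negatives. Treat the output as a shortlist to eyeball, not a verdict.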

This doesn’t change things too much for this SERP.

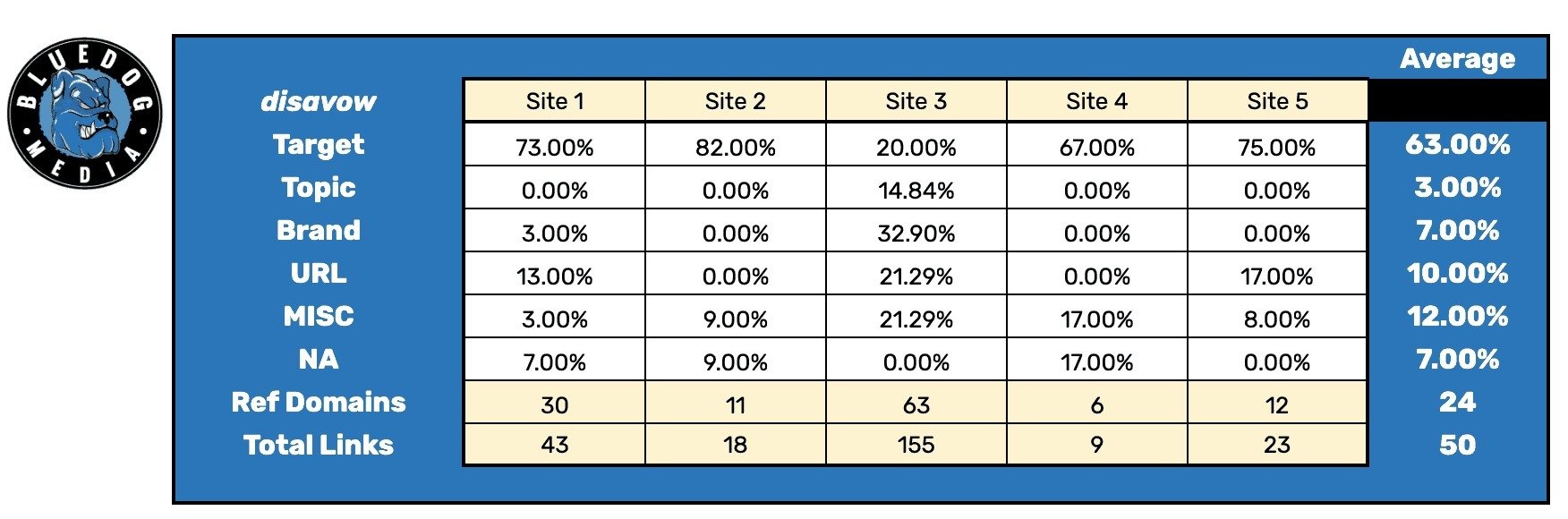

We also don’t know if a competitor has disavowed some of their links. Even if we did know, unless we have their disavow file, we don’t know which links they’ve decided to disavow.

Let’s say that site 3 (which has the most links by far) disavowed some of their lower quality links.

For this, I went back through Ahrefs and did look at some of the lower DR sites and what anchors they were using so that I could remove them from the baseline data.

The types of sites I removed were:

- DNS lookup sites

- Low DR spam sites

- Article submission sites

- Directories using EM anchor text

- Low quality web 2.0s

- Social bookmarks

- Link submission sites

- Other spam

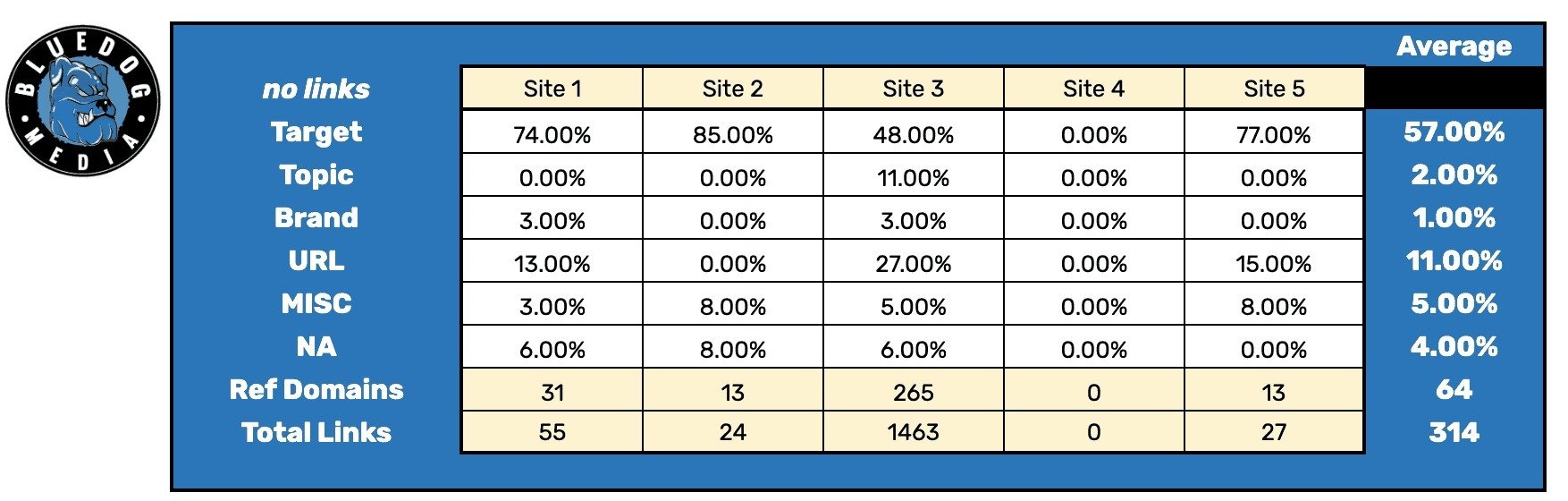

Now, our ratios look like this:

The percentages didn’t change much overall, but on site 3, the percentage of MISC anchors almost quadrupled and target was cut in half.

Other Examples

I’ve shown a couple of examples, and to not make this a massive post, let’s do some quickfire situations…

A site could be hiding some of its links (shocking, this community has never even heard of PBNs before).

This is what it would look like if site 4 had 15 hidden PBN links with target anchors.
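The arithmetic behind that is simple enough to sketch in Python. The counts below are invented for illustration (they are not site 4’s real numbers). The point is only how far a handful of invisible target anchors can move a small profile.

```python
def target_ratio(target_count, total_count, hidden_target_links=0):
    """Target-anchor percentage after adding links the tools cannot see."""
    total = total_count + hidden_target_links
    if total == 0:
        return 0.0
    return 100 * (target_count + hidden_target_links) / total

# Hypothetical page: 20 visible anchors, 10 of them target anchors.
visible = target_ratio(10, 20)       # 50.0
with_pbn = target_ratio(10, 20, 15)  # 25 of 35, roughly 71.4
```

The smaller the visible link profile, the bigger the swing from the same 15 links.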

We may also encounter a page that is ranking highly, isn’t an authority site, but doesn’t have any (visible) backlinks pointing to that page.

Do we factor the zeros into the average or omit them from the calculation?

Here’s site 4 without links but still counted towards the average:

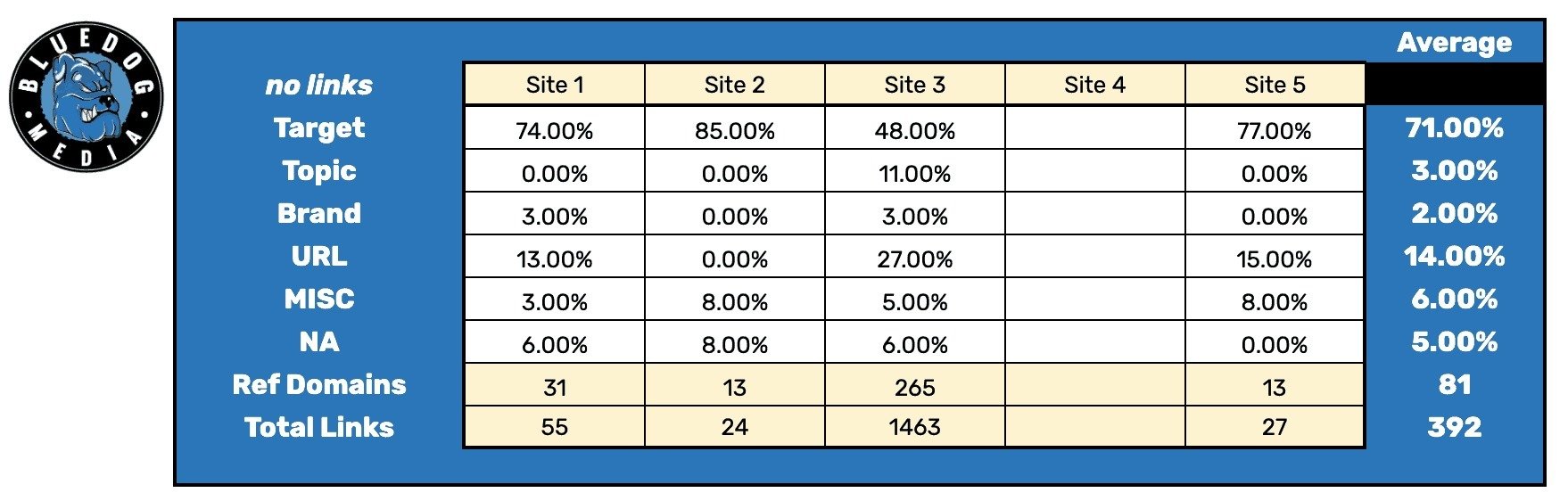

And here’s the same situation without the zeros counted towards the average.
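In code, the two options are one flag apart. This is a small Python sketch with invented ratios, where `None` marks a page with no visible links (my convention, so that it is not confused with a page that has links but 0% target anchors).

```python
def average_ratio(ratios, count_missing_as_zero):
    """ratios: one value per site, None when the page has no visible links."""
    if count_missing_as_zero:
        values = [0.0 if r is None else r for r in ratios]
    else:
        values = [r for r in ratios if r is not None]
    return sum(values) / len(values) if values else 0.0

# Hypothetical target ratios for five sites; the fourth has no visible links.
ratios = [80, 60, 70, None, 50]
with_zero = average_ratio(ratios, count_missing_as_zero=True)      # 52.0
without_zero = average_ratio(ratios, count_missing_as_zero=False)  # 65.0
```

Same SERP, same data, and a 13 point difference in the “average” depending on a choice most people never think about.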

Looking at all the various ways we’ve pulled data in order to calculate our anchor text ratios:

The average target anchor ratios we got were 51%, 57%, 63%, 69%, 71%, 71%, and 74%.

The average referring domains we got were 24, 58, 64, 66, 68, 81, and 276.
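To put a number on how spread out those results are, here is a quick Python summary of the two lists above. The figures are the ones from this post. The `summarize` helper is just something I made up for the example.

```python
from statistics import mean, median

target_ratios = [51, 57, 63, 69, 71, 71, 74]
referring_domains = [24, 58, 64, 66, 68, 81, 276]

def summarize(values):
    """Min, max, spread, mean (one decimal) and median of a list of numbers."""
    return {
        "min": min(values),
        "max": max(values),
        "spread": max(values) - min(values),
        "mean": round(mean(values), 1),
        "median": median(values),
    }

ratio_summary = summarize(target_ratios)       # spread of 23 points, median 69
domain_summary = summarize(referring_domains)  # mean 91 vs median 66
```

The target ratio moves by 23 percentage points depending on how the data was pulled, and for referring domains the mean (91) sits well above the median (66) because of the single 276 result.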

And there are plenty of other ways that we can look at and analyze our data as well as question what’s natural.

In situations where things look odd and we may suspect hidden links are in use or other oddities that may not make a site’s Ahrefs data an accurate representation of the SERP, we may opt to skip over that site.

However, we’re then factoring in sites that don’t rank as well as other competitors and we may even be pulling in data from page 2 – which also may not be an accurate representation of what it takes to rank well on page 1.

Site 3 has far more referring domains and links than the other sites. Should outliers be counted?

On the point of outliers: if a site is overly aggressive with target anchors, is it a good representation of how often you should use target anchors? It could be ripe for a penalty.

Site 3 is a homepage, which is way more likely to have brand and topic anchors than an inner page. It’s also most likely going to have more links than a competitor’s inner pages.

Which is natural.

It wouldn’t look as natural for an inner page to have that many links in this situation or as many topic anchors.

Speaking of natural, is it natural for a company called “Pyramid Roofing” to have many target anchors due to the keyword “roofing” in their company name when compared to “Joe’s Construction Company”?

That calls into question how many anchor types we should be looking at. Brand, brand + keyword, brand contains keyword?

This also raises the question of weighting.

Should pages that are less relevant to the query but ranking due to having more links or site authority be treated the same as a more relevant page that would find it easier to rank with fewer links due to relevance?

Jeez, this stuff is complicated.

I want to address one point:

“We’re looking for guidance, not accuracy.”

Even with the examples that I pulled the data for above, not all of them have massive changes to the outcome.

But the changes were large enough that if you followed them blindly, there’s an argument that you’re over optimized or under optimized from a different perspective.

There are just too many different ways that we can pull in and analyze our data.

What Should We Do?

Am I saying that you shouldn’t use anchor text ratios or factor them in when planning a link campaign?

No.

We still use them at our bulk dofollow link checker, Linkbox (ooooo, a target anchor. Something else to consider?)

The main takeaway I’m hoping to achieve with this article is that there’s more than one way to skin a cat.

And that we can’t take these metrics at face value but we have to look deeper.

Which of the top ranking pages are most representative of my page?

Why does this site have this many of that type of anchor? Is that something I want to replicate?

Filter down to where target anchors are in use: what types of links are they? Guest posts, blog comments, directories, resource pages?

Depending on how you organize your anchor types, it’s worth asking questions like “Do the sites that have the higher target anchor usage have a keyword in their company name?” or “Are the instances of those links brand mentions on directories?”

Match Anchors To Link Types

The main purpose of optimizing your anchor ratios is to not stand out and to look natural to Google.

But natural goes beyond just the types of links or the types of anchors you’re using.

There’s also the question of what type of anchor is natural for the type of link you’re getting.

For example, editorial websites often use a single word, the title of a post, or a statistic/data point as an anchor.

Not “best crossfit shoes in 2019”.

How often does someone put the full URL of a page as their anchor rather than an image or text in a blog post?

When competitors use guest posts, what types of anchors are they using? Long, short, brand, topic, target?
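One way to answer that is to cross-tabulate anchor types by link type instead of looking at one big ratio. Here is a minimal Python sketch. It assumes you have already tagged each link with a link type and an anchor type yourself (no tool hands you this), and the rows are invented.

```python
from collections import Counter, defaultdict

def anchors_by_link_type(links):
    """links: iterable of (link_type, anchor_type) pairs.
    Returns {link_type: Counter of anchor types}."""
    table = defaultdict(Counter)
    for link_type, anchor_type in links:
        table[link_type][anchor_type] += 1
    return table

# Hypothetical, hand-tagged competitor links.
links = [
    ("guest post", "Target"),
    ("guest post", "Topic"),
    ("guest post", "Target"),
    ("directory", "Brand"),
    ("directory", "URL"),
    ("blog comment", "Brand"),
]
table = anchors_by_link_type(links)
guest_post_mix = dict(table["guest post"])  # {"Target": 2, "Topic": 1}
```

A 60% target ratio made up of guest posts is a very different thing from a 60% target ratio made up of blog comments, and this view makes that visible.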

Don’t take that as a blanket statement either. If your competitors are all slamming their sites with exact match blog comments, I wouldn’t recommend following suit.

You can, and probably should, continue to factor anchor text ratios into your link campaigns, but keep in mind that there’s a lot more happening under the hood than “I need 5 more target anchors.”

But hey, what do I know?

A few more words about a very helpful SEO tool

To get dependable results from your SEO strategy, you have to keep control of your link building. With that goal in mind, we developed the Linkbox backlink software.

Main features:

- Online backlink checker tool: check many important parameters for each of your backlinks in one action, including response codes, link availability, indexability, and more.

- Backlinks monitor: check all your backlinks across analyzer databases such as Ahrefs, GSC, and SEMRush, then analyze them and pick up useful ideas for link building.

- Google link index checker: it is crucial to keep track of whether your backlinks are indexed, especially after Google updates. Google can drop some of your backlinks from its index, and without a check like this you would not even find out.

- Link indexer pro: index your backlinks and get additional link juice from pages Google doesn’t know about.

- Anchor text checker: a convenient module for checking the anchor texts of all your backlinks, combining that data with your keyword rankings, and finding which exact anchors give you high rankings.

- Nofollow/dofollow link checker: find out your backlinks’ attributes and keep your link profile under control.

With these features, it’s easier to gain an advantage over your competitors and get better results from the same link building budget. You can get one hundred free credits to test it right now.

Article reviewed by SEO expert Topher Kohan

Senior Product Manager – SEO / Growth at The Weather Company, an IBM Business

Topher is just a guy that likes to do SEO and tries to be a good person.